OpenAI CLIP: AI Models that Support Images and Text at the Same Time

What is OpenAI CLIP?

OpenAI CLIP is a neural network model released by OpenAI on January 5, 2021 that can recognize and link images and text. CLIP is a multimodal model that processes images and text in parallel, and it can be applied to image retrieval, geolocation, video action recognition, and similar tasks. It combines English-language conceptual knowledge with the semantic content of images by encoding both text and visual information into a shared multimodal embedding space. CLIP's practical results have been significant for the further advancement of computer vision technology.

Price: Free

Tag: Neural Network Model

Release Time: January 5, 2021

Developer(s): OpenAI


OpenAI CLIP Functions

- It retrieves the image most relevant to a given sentence

- It can link text with images by encoding both into the same embedding space
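
The retrieval function above can be illustrated with a minimal sketch. It assumes the query sentence and each image have already been encoded into vectors in CLIP's shared embedding space. The three-dimensional vectors and file names below are made-up placeholders, not real CLIP outputs. Under that assumption, retrieval reduces to picking the image whose embedding has the highest cosine similarity with the text embedding.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def retrieve_best_image(text_embedding, image_embeddings):
    """Return (name, score) for the image most similar to the text."""
    scored = [
        (name, cosine_similarity(text_embedding, emb))
        for name, emb in image_embeddings.items()
    ]
    return max(scored, key=lambda pair: pair[1])

# Hypothetical embeddings; a real system would obtain these from
# CLIP's image encoder and text encoder.
image_embeddings = {
    "dog.jpg": [0.9, 0.1, 0.0],
    "cat.jpg": [0.1, 0.9, 0.1],
    "car.jpg": [0.0, 0.2, 0.9],
}
text_embedding = [0.8, 0.2, 0.1]  # stands in for "a photo of a dog"

best_name, best_score = retrieve_best_image(text_embedding, image_embeddings)
print(best_name, round(best_score, 3))
```

Because both kinds of input live in the same space, the same similarity function works in the other direction too: given an image embedding, it can rank candidate sentences.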

Limitations of OpenAI CLIP

Although CLIP is flexible and efficient and recognizes common objects well, it performs poorly on more complex or abstract concepts, especially in zero-shot mode, where it is applied to a task it has not been explicitly trained on.
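
To make the zero-shot setting concrete: each candidate label is written as a text prompt, and the image is assigned to the prompt whose embedding is closest to the image embedding. The following is a sketch of that scoring step only, using invented embeddings and prompts. The scale factor of 100 follows the usage example in the CLIP repository's README; the function names are hypothetical.

```python
import math

def normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def softmax(logits):
    """Numerically stable softmax over a list of numbers."""
    m = max(logits)
    exps = [math.exp(l - m) for l in logits]
    total = sum(exps)
    return [e / total for e in exps]

def zero_shot_probs(image_embedding, prompt_embeddings, scale=100.0):
    """Probability of each prompt given the image (scaled cosine similarity)."""
    img = normalize(image_embedding)
    logits = []
    for emb in prompt_embeddings:
        txt = normalize(emb)
        logits.append(scale * sum(a * b for a, b in zip(img, txt)))
    return softmax(logits)

# Hypothetical prompts and embeddings, for illustration only.
prompts = ["a photo of a dog", "a photo of a cat", "a photo of a car"]
prompt_embeddings = [[0.9, 0.1, 0.0], [0.1, 0.9, 0.1], [0.0, 0.2, 0.9]]
image_embedding = [0.8, 0.2, 0.1]

probs = zero_shot_probs(image_embedding, prompt_embeddings)
predicted = prompts[probs.index(max(probs))]
print(predicted)
```

The prediction can only be as good as the match between the image and one of the supplied prompts, which is one way to picture why abstract or unusual concepts are harder in this mode.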

OpenAI CLIP Download

- Go to the official OpenAI CLIP website

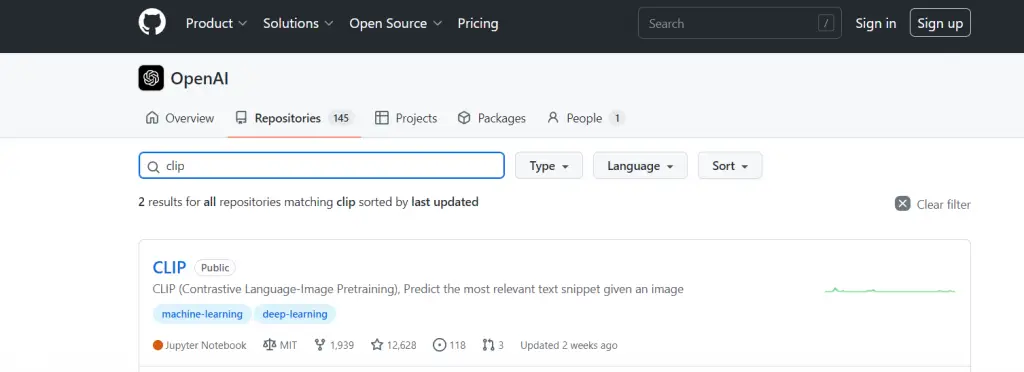

- Scroll to the bottom of the page, find the GitHub link, and click it

- Click Repositories, enter CLIP in the search box, and click the CLIP repository in the results

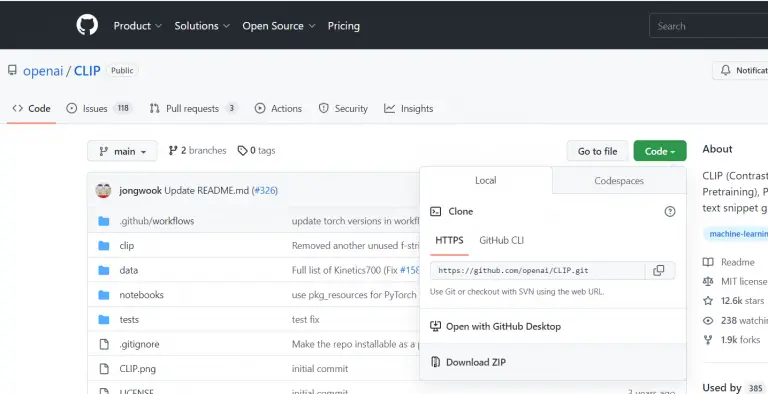

- Click Code, then click Download ZIP to download the compressed archive
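
The same archive can be fetched without the browser. The sketch below assumes the repository is `openai/CLIP` on GitHub and that its default branch is `main`; the helper names `github_zip_url` and `download_zip` are hypothetical. The URL follows the pattern GitHub uses for its Download ZIP button.

```python
import urllib.request

def github_zip_url(owner, repo, branch):
    """Build the archive URL that GitHub's Download ZIP button points to."""
    return f"https://github.com/{owner}/{repo}/archive/refs/heads/{branch}.zip"

def download_zip(url, destination):
    """Fetch the archive and save it to a local file (needs network access)."""
    urllib.request.urlretrieve(url, destination)
    return destination

# Assumption: repository openai/CLIP, default branch "main".
clip_zip_url = github_zip_url("openai", "CLIP", "main")
print(clip_zip_url)

# Uncomment to actually download the archive:
# download_zip(clip_zip_url, "CLIP-main.zip")
```

The download call is left commented out so the snippet can be run safely offline; only the URL is printed.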

OpenAI CLIP Pricing

OpenAI CLIP is free, open-source software, and anyone can use the CLIP model at no cost.

FAQ

Practical applications of OpenAI's CLIP include image search and reverse image search. It reduces complexity for developers, freeing them to focus on other tasks, and it learns visual representations from natural language data. It can also be used to link English-language conceptual knowledge with the semantic content of images.

OpenAI is a research organization. People associated with it include Sam Altman, Ilya Sutskever, Greg Brockman, Olivier Grabias, Wojciech Zaremba, Elon Musk, John Schulman, and Andrej Karpathy.

Yes, OpenAI CLIP is a free, open-source model. Anyone can download it through the official website and use it.