Unmasking Zvbear: The Mastermind Behind the Taylor Swift AI Deepfake Scandal

In the digital era where AI tools have become integral to our online experiences, the controversy surrounding Zvbear and the AI-generated deepfake images of Taylor Swift has ignited a significant debate about the ethical use of artificial intelligence. This incident not only highlights the potential for AI to infringe upon personal privacy and dignity but also underscores the urgent need for robust content moderation on social media platforms and a reevaluation of legal frameworks to protect individuals against digital exploitation.

Zvbear and Taylor Swift AI Deepfake Controversy

In the vast expanse of the internet, where innovation and creativity meet at the crossroads of technology, a controversy erupted that captured the attention of millions worldwide. At the heart of this storm was Zvbear, reportedly a pseudonym for Zubear Abdi, who became infamously linked to a series of AI-generated deepfake images of the global pop icon Taylor Swift. These images, explicit in nature and created without consent, sparked a fierce debate over the ethical use of artificial intelligence and the protection of individual privacy online. Zvbear’s alleged actions, which involved distributing these images across social media platforms including 4chan, Reddit, and X (formerly known as Twitter), not only violated Taylor Swift’s privacy and dignity but also raised alarming questions about how easily deepfake technology can be misused to create realistic and harmful content.

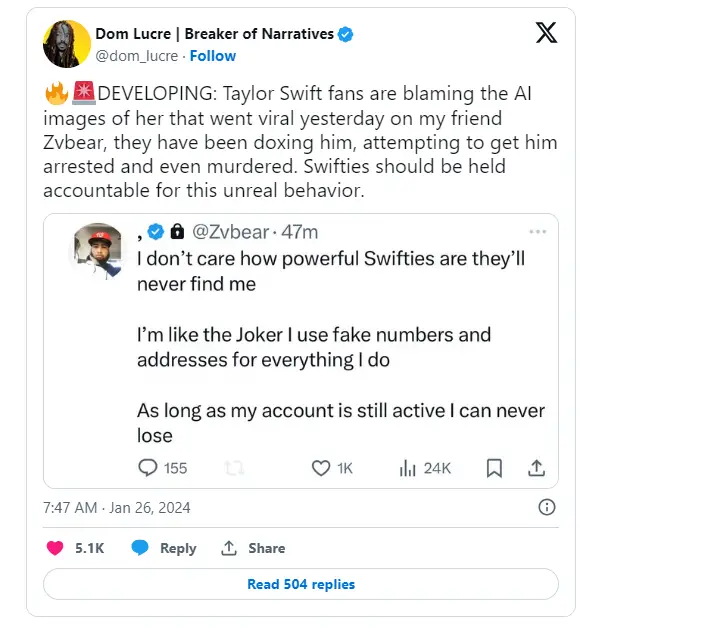

The backlash was swift and severe, as fans of Taylor Swift, collectively known as Swifties, alongside concerned netizens, rallied against the spread of these non-consensual images. The controversy shed light on the darker side of AI capabilities, where the line between real and artificial becomes disturbingly blurred. As the images proliferated, the outcry led to a broader discussion on the need for stringent regulations and ethical guidelines to govern the use of deepfake technology. The incident with Zvbear underscored a critical moment in the digital age, highlighting the urgent need for a balance between technological advancement and the safeguarding of personal integrity and privacy in the online realm.

Who is Zubear Abdi, aka Zvbear?

Zubear Abdi, more widely recognized by his online alias Zvbear, emerged as a contentious figure in the digital world following his reported association with the creation and dissemination of AI-generated deepfake images of Taylor Swift. Reportedly a 27-year-old of Somali descent residing in Ontario, Canada, he built a digital footprint across various social media platforms, where he was known for sharing explicit content. His activities, particularly those involving the manipulation of images to create explicit content without consent, thrust him into the spotlight, sparking widespread controversy and debate over the ethical use of digital tools and the protection of individuals’ digital identities.

The revelation of Zvbear’s actions led to significant public backlash, resulting in the deactivation of his social media accounts and a loss of followers, reflecting the community’s strong stance against the misuse of AI for creating non-consensual explicit content. This incident not only highlighted the potential for harm inherent in digital anonymity and the misuse of AI technology but also raised questions about the responsibilities of social media users and platforms in preventing such abuses. Zvbear’s case serves as a cautionary tale about the darker potentials of digital innovation when divorced from ethical considerations and respect for individual privacy.

What is “Taylor Swift AI”?

“Taylor Swift AI” refers to the series of artificial intelligence-generated images that depicted the pop icon Taylor Swift in various explicit scenarios without her consent. These deepfake images were crafted using advanced AI technologies to superimpose Swift’s likeness onto bodies in compromising positions, creating highly realistic and yet entirely fabricated visuals. The controversy surrounding these images not only brought to light the capabilities of AI in creating lifelike content but also sparked a significant debate on the ethical implications of using such technology to infringe upon an individual’s privacy and dignity.

What is Deepfake?

The term “deepfake” combines “deep learning” and “fake”: it refers to synthetic images, video, or audio produced by deep learning models and made to look or sound authentic. The technology can manipulate existing media or fabricate it entirely, and the results are often convincing enough that real and manipulated content are difficult to tell apart. Although the underlying techniques also serve legitimate media production, deepfakes have increasingly become a source of concern due to their potential misuse in spreading misinformation, creating non-consensual explicit content, and undermining the trustworthiness of digital media.

The Dangers of Deepfakes

Deepfake technology, while a marvel of artificial intelligence, harbors a dark side that poses significant risks to individuals and society.

- Misinformation and Manipulation: Deepfakes can be used to create false narratives or alter the truth, potentially influencing public opinion and swaying political outcomes.

- Non-consensual Content: They can generate explicit content without an individual’s consent, leading to severe emotional and psychological distress for the victims.

- Identity Theft: Deepfakes can impersonate individuals, leading to identity theft and fraud, damaging reputations and causing financial harm.

- Social and Political Unrest: Manipulated content can incite social and political unrest by spreading false information or discrediting public figures.

- Erosion of Trust: The prevalence of deepfakes can erode trust in digital content, making it challenging to discern what is real and what is fabricated.

- Cyberbullying and Harassment: They can be weaponized for cyberbullying and harassment, targeting individuals with malicious intent.

- Legal and Ethical Challenges: Deepfakes raise complex legal and ethical issues, challenging existing frameworks around consent, copyright, and defamation.

X Can't Stop Spread of Explicit, Fake AI Taylor Swift Images

Platform Limitations

Despite efforts by X (formerly Twitter) and other social media platforms to moderate content, the spread of explicit, fake AI-generated images of Taylor Swift highlights significant challenges in content moderation. The rapid dissemination of these images underscores the limitations of current algorithms and human moderation teams in identifying and removing deepfake content before it goes viral, illustrating the need for more advanced detection technologies and stronger content moderation policies.

User Ingenuity

The individuals behind the creation and distribution of these deepfake images often employ sophisticated methods to bypass platform safeguards, such as altering image metadata or using coded language. This ingenuity in evading detection complicates efforts by platforms like X to effectively police content, necessitating a continuous evolution of moderation strategies to keep pace with new tactics employed by malicious actors.

Legal and Ethical Gray Areas

The spread of deepfake images on platforms like X also brings to light the legal and ethical gray areas surrounding digital content. Current laws may not fully address the nuances of AI-generated content, leaving platforms in a difficult position when it comes to enforcement. This gap highlights the urgent need for updated regulations and clearer guidelines that specifically address the challenges posed by deepfake technology.

Community Response

The role of the community in reporting and flagging inappropriate content is crucial, yet the sheer volume of uploads and the speed at which deepfake images can spread often overwhelm these community-driven safeguards. While user reports play a vital role in content moderation, the Taylor Swift AI image scandal illustrates the limitations of relying solely on community policing to stem the tide of harmful deepfake content.

'Protect Taylor Swift' Campaign on Social Media

Swifties Unite

The ‘Protect Taylor Swift’ campaign emerged as a powerful movement on social media, driven by the pop star’s dedicated fan base, known as Swifties. In response to the spread of explicit, fake AI images of Taylor Swift, fans worldwide rallied together, using the hashtag #ProtectTaylorSwift to denounce the deepfakes and support the artist. This collective action showcased the strength of fan communities in standing against online harassment and advocating for the privacy and dignity of their beloved artist, highlighting the positive impact of solidarity in digital spaces.

Viral Hashtag Movement

The campaign quickly gained momentum, with the hashtag #ProtectTaylorSwift going viral across various social media platforms. Fans used the hashtag to spread awareness about the deepfake controversy, call out the unethical use of AI, and urge others to report and not share the manipulated images. The viral nature of the campaign played a crucial role in amplifying the message, drawing attention from the broader public and media outlets, and putting pressure on social media platforms to take action against the spread of such harmful content.

Celebrity and Public Support

The ‘Protect Taylor Swift’ campaign transcended the fan community, garnering support from celebrities, public figures, and the general public who recognized the broader implications of the issue. This widespread backing lent additional weight to the campaign, emphasizing the universal need for respectful online interactions and the protection of individuals’ rights in digital environments. The collective voice of fans, celebrities, and concerned netizens underscored the societal rejection of deepfake abuse and the importance of maintaining ethical standards in the use of technology.

Online Outcry and Potential Litigation

Public Outrage

The dissemination of AI-generated explicit images of Taylor Swift on social media platforms like X sparked widespread public outrage. The backlash was not limited to Swift’s fanbase; it extended across the digital community, with users condemning the violation of privacy and the unethical use of AI technology. This collective disapproval highlighted the growing concern over digital consent and the potential harm caused by deepfakes, emphasizing the need for a more responsible online culture.

Legal Implications

The controversy surrounding the deepfake images has raised significant legal questions, particularly regarding the creation and distribution of non-consensual explicit content. Reports suggest that Taylor Swift’s legal team is considering action against the perpetrators, which could set a precedent for future cases involving deepfake technology. This potential litigation underscores the urgent need for legal frameworks to adapt to the challenges posed by advanced digital technologies, ensuring individuals’ rights are protected in the face of evolving online threats.

The Role of Social Media Platforms in Content Moderation

Enhancing Detection Capabilities

Social media platforms are at the forefront of the battle against deepfake content, necessitating the development of more sophisticated detection tools. The rapid advancement of AI technology requires platforms to continuously update and refine their content moderation systems to identify and remove harmful deepfake content effectively. Investing in AI-driven moderation tools and collaborating with AI experts can help platforms stay ahead of malicious actors.
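As one illustration of what a detection tool can look like, the sketch below shows perceptual hashing, a commonly described way to recognize re-uploads of an image that has already been flagged, even after small changes such as brightening. It is a minimal example under stated assumptions and not a description of how X or any other platform actually works: the function names, the tiny 4x4 grayscale grids, and the distance threshold are all hypothetical, and this approach only matches known images rather than detecting newly generated deepfakes.

```python
from typing import List

# Hypothetical representation: a small grayscale image as rows of 0-255 values.
Grid = List[List[int]]


def average_hash(pixels: Grid) -> int:
    """Build a hash with one bit per pixel: 1 if the pixel is above the mean."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Count the bit positions where two hashes differ."""
    return bin(a ^ b).count("1")


def is_probable_match(a: Grid, b: Grid, max_distance: int = 2) -> bool:
    """Treat two images as the same if their hashes differ in few bits.

    The threshold of 2 is an illustrative assumption, not a tuned value.
    """
    return hamming_distance(average_hash(a), average_hash(b)) <= max_distance


# A previously flagged image (toy data).
original = [
    [10, 20, 200, 210],
    [15, 25, 205, 215],
    [220, 230, 30, 40],
    [225, 235, 35, 45],
]

# The same image, slightly brightened before being re-uploaded.
brightened = [[min(255, value + 5) for value in row] for row in original]

# An unrelated image with the opposite light/dark layout.
different = [
    [200, 210, 10, 20],
    [205, 215, 15, 25],
    [30, 40, 220, 230],
    [35, 45, 225, 235],
]

print(is_probable_match(original, brightened))  # brightened copy still matches
print(is_probable_match(original, different))   # unrelated image does not
```

Because the hash depends on the overall light and dark pattern and not on exact bytes, a uniformly brightened copy yields the same hash, whereas a byte-for-byte checksum would change. Real systems would work on downscaled full-size images and need carefully chosen thresholds to limit false matches.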

Policy Evolution and Enforcement

As deepfake technology evolves, so too must the policies governing content on social media platforms. Clear, comprehensive guidelines that explicitly address deepfakes and non-consensual content are essential. Platforms must ensure these policies are rigorously enforced, with swift action taken against violations to deter potential offenders. Regular policy reviews and updates, in consultation with legal and ethical experts, can help platforms adapt to the changing digital landscape.

Community and User Education

Educating users about the dangers of deepfakes and the importance of ethical online behavior plays a crucial role in content moderation. Social media platforms can lead initiatives to raise awareness about the impact of deepfakes and encourage responsible content sharing. By fostering an informed user base, platforms can empower users to contribute to a safer online environment, complementing technical and policy-based moderation efforts.

Conclusion

The Zvbear controversy, centered around the AI-generated deepfake images of Taylor Swift, serves as a stark reminder of the double-edged sword that is artificial intelligence. While AI tools offer unprecedented opportunities for creativity and innovation, their misuse, as demonstrated in this case, poses serious threats to individual privacy, security, and the integrity of online content. The collective response from the public, the ‘Protect Taylor Swift’ campaign, and the potential legal actions underscore the necessity for a collaborative effort among tech companies, legal systems, and the online community to establish a safer digital environment. As we advance further into the age of AI, it is imperative that ethical considerations and respect for human dignity guide the development and application of these powerful tools.