How to Fight Deepfakes in 2024: The Ultimate Guide

In an era where the digital landscape is continuously evolving, the emergence of deepfake technology has presented unprecedented challenges. These AI-generated forgeries, produced by AI image and video generators that can convincingly replicate a person’s likeness, blur the line between reality and fiction and raise significant ethical and legal concerns about privacy, security, and the integrity of information. As we navigate this complex terrain, the development and application of AI tools for detecting and combating deepfakes have become crucial.

In the digital age, deepfake technology challenges our trust in the authenticity of information.

What are deepfakes?

At their core, deepfakes are hyper-realistic videos or images generated using advanced artificial intelligence and machine learning techniques. This technology can make anyone appear to say or do things they never actually did, creating a parallel universe where the impossible seems mundane.

The term “deepfakes” itself is a blend of “deep learning” and “fakes,” pointing directly to its roots in deep learning algorithms, a subset of machine learning. These algorithms train on vast datasets of real images and videos, learning to replicate the nuances of human expressions, speech, and movements. The first notable instances of deepfakes surfaced on the internet in late 2017, quickly capturing public attention for their potential to entertain, but more alarmingly, to deceive. From swapping celebrities into movies they never starred in, to more sinister uses like spreading misinformation or creating non-consensual explicit content, deepfakes represent a double-edged sword, cutting into the fabric of digital trust.

Why should we fight against deepfakes?

The fight against deepfakes transcends mere digital hygiene; it’s a battle for the very essence of truth and trust in the digital age. These AI-crafted illusions, capable of mimicking reality with alarming precision, pose a multifaceted threat. They can be weaponized to undermine democratic processes by fabricating political statements, invade personal privacy through unauthorized use of likenesses, and propagate misinformation, eroding public trust in media. The potential for deepfakes to fuel cyberbullying, extortion, and social discord further underscores the imperative to develop robust detection and regulation mechanisms. In essence, combating deepfakes is crucial to preserving the integrity of digital communication, protecting individual rights, and maintaining the foundational trust that underpins our increasingly interconnected society.

The risks of deepfakes

The advent of deepfakes has ushered in a new era of digital deception, where the line between reality and fabrication becomes increasingly blurred.

- Privacy Violations: Individuals’ likenesses can be used without consent, leading to unauthorized and potentially damaging representations in digital content.

- Misinformation and Disinformation: Fabricated videos and images can spread false information, misleading the public on critical issues and events.

- Political Manipulation: Deepfakes can be used to create false narratives or statements by political figures, influencing public opinion and election outcomes.

- Financial Fraud: Scammers can use deepfakes to impersonate trusted figures in finance, tricking individuals and organizations into fraudulent transactions.

- Social Discord: Manipulated content can incite violence, spread hate, and disrupt societal harmony by depicting false or inflammatory actions by individuals or groups.

- Cyberbullying and Extortion: Deepfakes can be weaponized for personal attacks, including non-consensual explicit content, leading to emotional distress and reputational damage.

- Undermining Journalism and Legal Proceedings: Fabricated evidence and false narratives can challenge the credibility of journalistic reporting and legal investigations, eroding public trust in these institutions.

The Applications of Deepfake Technology

While deepfakes are often highlighted for their potential misuse, this technology also harbors a plethora of applications across various fields, harnessing its power for creative, educational, and innovative purposes.

- Entertainment and Media: Deepfakes can revolutionize filmmaking and video content creation, allowing for seamless actor dubbing, de-aging, or even resurrecting historical figures for performances.

- Education and Training: By recreating historical speeches or events with high accuracy, deepfakes can provide immersive learning experiences and enhance educational content.

- Art and Creativity: Artists and creators can employ deepfake technology to push the boundaries of creativity, generating new forms of digital art and storytelling.

- Personalization in Advertising: Deepfakes enable highly personalized advertising campaigns by adapting spokespersons or scenarios to resonate with diverse audiences.

- Voice Synthesis and Translation: Deepfake audio technology can be used for real-time language translation, making content accessible across linguistic barriers and enhancing global communication.

- Face Replacement in Video Conferencing: Deepfakes can modify appearances in real time during video calls, enhancing privacy and personalization in remote communication.

- Forensic and Law Enforcement: In certain contexts, deepfakes can aid in crime scene reconstruction or simulate events based on witness testimonies, providing visual aids for investigations and legal proceedings.

Cases of Harm Caused by Deepfake Technology

1. Taylor Swift Deepfake Incident

In January 2024, explicit deepfake images of Taylor Swift circulated on social media and were viewed millions of times, prompting calls for legislation in the US.

- Widespread Distribution: The images spread across platforms like X and Telegram, reaching millions.

- Legislative Response: Recognizing the gravity of the incident, US politicians called for laws criminalizing the creation of non-consensual deepfake images.

- Emotional and Reputational Damage: The incident highlights the potential for deepfakes to cause significant harm to individuals’ well-being and reputation.

- Platform Action: Social media platforms took steps to remove the images and block related searches, underscoring the challenge of controlling such content.

2. Scarlett Johansson: Battling Unauthorized Digital Likeness

Scarlett Johansson’s ordeal with deepfake technology, where her image was non-consensually used in explicit content, highlights the dark side of digital manipulation.

- Widespread Misuse: Her image has been extensively exploited in deepfake pornography, impacting her personal and professional life.

- Legal Hurdles: Johansson confronts significant legal challenges in tackling these violations due to the anonymity of the creators.

- Call for Action: Her situation has sparked demands for stricter regulations and laws to combat deepfake misuse.

3. Emma Watson: An Advocate's Image Compromised

Emma Watson’s image has been tarnished by deepfakes, clashing with her real-life advocacy for women’s rights, thus invading her privacy and undermining her efforts.

- Contradiction to Advocacy: Deepfakes of Watson starkly oppose her advocacy for women’s rights and privacy.

- Impact on Public Image: The creation of explicit deepfakes has detrimental effects on her reputation and the causes she supports.

- Need for Digital Rights: Watson’s case emphasizes the necessity for robust protection of individuals’ digital rights and integrity.

What Are AI Deepfake Detector Tools?

AI Deepfake Detector Tools are sophisticated software solutions designed to identify and differentiate between authentic and AI-manipulated content. Leveraging advanced artificial intelligence and machine learning algorithms, these tools scrutinize videos and images for subtle inconsistencies and anomalies that typically go unnoticed by the human eye, such as irregular facial expressions, unnatural movements, or inconsistent lighting. Their primary objective is to safeguard the integrity of digital media by exposing deepfakes, thereby mitigating the risks associated with misinformation, identity theft, and other forms of digital deception. As deepfake technology evolves, the development and refinement of these detection tools become increasingly crucial in the ongoing effort to maintain trust and authenticity in the digital realm.
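To make the idea of an “inconsistency check” concrete, here is a minimal Python sketch that flags abrupt frame-to-frame brightness jumps, a toy stand-in for the “inconsistent lighting” cue mentioned above. It is not how any particular detection tool works: the function name `flag_brightness_jumps`, the `factor` threshold, and the assumption that each frame has already been reduced to a single average brightness value are all hypothetical choices made for illustration. Real detectors typically rely on trained models that weigh many cues at once.

```python
import statistics


def flag_brightness_jumps(frame_brightness, factor=5.0):
    """Return indices of frames whose brightness jump is unusually large.

    `frame_brightness` is a list with one average brightness value per frame
    (an assumed, pre-computed input). A jump counts as unusual when it
    exceeds `factor` times the median jump across the whole clip.
    """
    # Absolute change in brightness between each pair of consecutive frames.
    jumps = [
        abs(b - a) for a, b in zip(frame_brightness, frame_brightness[1:])
    ]
    if not jumps:
        return []

    # Use the median jump as the "typical" change; guard against zero.
    typical = statistics.median(jumps)
    threshold = factor * max(typical, 1e-9)

    # Report the index of the frame that follows each suspicious jump.
    return [i + 1 for i, jump in enumerate(jumps) if jump > threshold]
```

A clip whose lighting drifts gradually produces no flags, while a sudden jump in the middle of otherwise steady footage is reported as a frame worth a closer look. A flag on its own proves nothing: scene cuts and camera flashes trigger it too.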

3 Best Deepfake Detector Tools & Techniques in 2024

1. Sentinel: Guarding Against Digital Deception

Sentinel stands as a beacon of defense in the digital realm, offering AI-based protection primarily to democratic governments, defense agencies, and enterprises. This platform distinguishes itself by analyzing digital media uploaded via its website or API, swiftly identifying AI forgeries and providing detailed visualizations of manipulations. Sentinel’s prowess lies in its advanced AI algorithms that meticulously dissect media to pinpoint alterations, ensuring the integrity of digital content remains uncompromised.

Pros:

- High accuracy in detecting deepfakes.

- User-friendly interface for straightforward media analysis.

Cons:

- Primarily tailored for organizations, limiting accessibility for individual users.

- Relies on high-quality input data for optimal performance.

Best Suited For: Large organizations and governmental bodies seeking robust digital media verification tools.

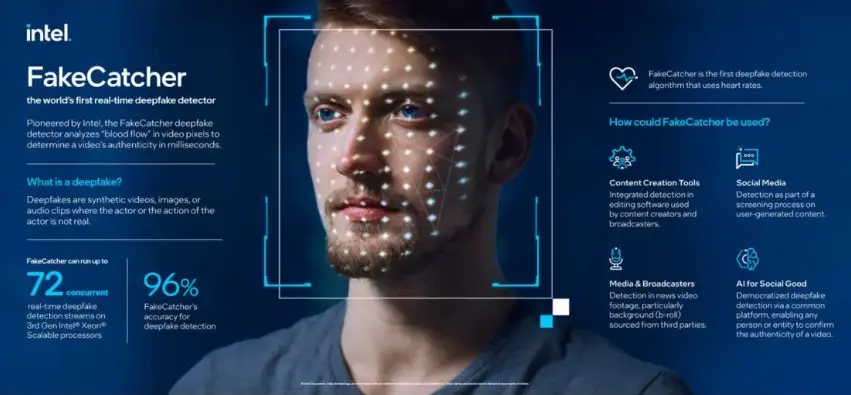

2. Intel's Real-Time Deepfake Detector: Instantaneous Integrity Insights

Intel’s FakeCatcher technology offers real-time deepfake detection, with Intel reporting a 96% accuracy rate. Developed in collaboration with the State University of New York at Binghamton, it runs on Intel hardware and software and analyzes videos through a web-based platform. Its approach relies on detecting the subtle color changes that natural “blood flow” causes in the pixels of a face, a signal known as photoplethysmography (PPG). This signal is present in footage of real people but typically absent or inconsistent in deepfakes, allowing near-instant verification of content authenticity.
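The “blood flow” cue can be illustrated with a small Python sketch. This is not Intel’s implementation: it assumes the face region of each frame has already been reduced to one average green-channel value, and it simply checks whether the strongest rhythm in that series falls in a typical heart-rate band. The function names and the 0.7 to 4.0 Hz band (roughly 42 to 240 beats per minute) are assumptions chosen for illustration.

```python
import cmath
import math


def dominant_frequency_hz(signal, fps):
    """Return the strongest non-constant frequency in `signal` (plain DFT)."""
    n = len(signal)
    if n < 2:
        return 0.0

    # Subtract the mean so the constant (DC) component does not dominate.
    mean = sum(signal) / n
    centered = [s - mean for s in signal]

    best_k, best_power = 0, 0.0
    for k in range(1, n // 2 + 1):
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * t / n)
            for t, x in enumerate(centered)
        )
        power = abs(coeff) ** 2
        if power > best_power:
            best_k, best_power = k, power

    # Convert the DFT bin index to a frequency in hertz.
    return best_k * fps / n


def has_plausible_pulse(green_means, fps, low_hz=0.7, high_hz=4.0):
    """True if the dominant rhythm lies in an assumed heart-rate band."""
    freq = dominant_frequency_hz(green_means, fps)
    return low_hz <= freq <= high_hz
```

Calling `has_plausible_pulse(values, fps=30)` on ten seconds of per-frame averages gives a rough yes or no answer. A production system would need face tracking, noise filtering, and a trained classifier on top of a signal like this, so treat the sketch as an intuition aid only.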

Pros:

- Real-time detection provides immediate results.

- High accuracy enhances trust in content verification.

Cons:

- Dependency on specific Intel hardware and software may limit widespread adoption.

- May not perform as well with low-quality or heavily edited videos.

Best Suited For: Media companies and content creators in need of immediate verification to maintain content integrity.

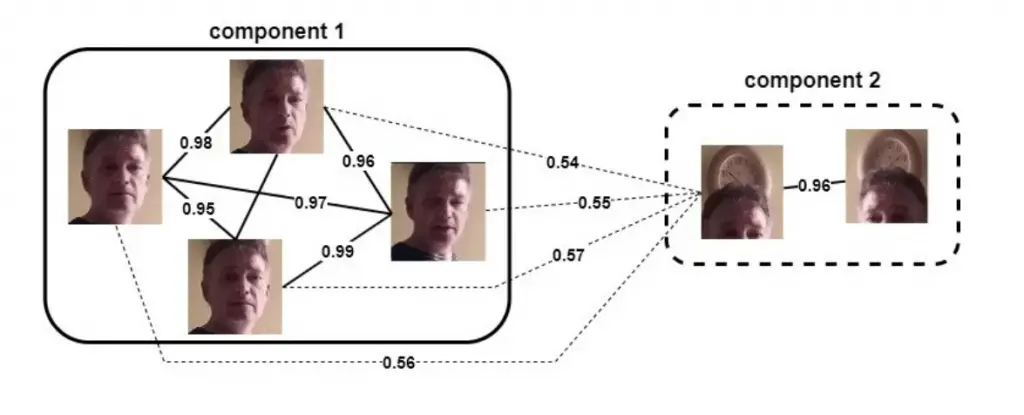

3. WeVerify: The Truth Sleuth

WeVerify emerges as a comprehensive solution for debunking disinformation and verifying digital content. This project excels in analyzing and contextualizing social media and web content, employing cross-modal verification, social network analysis, and a blockchain-based database of known fakes. Its strength lies in its holistic approach, combining human expertise with AI to provide a nuanced understanding of digital content’s authenticity, making it an invaluable tool in the fight against digital falsehoods.
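One way to picture a tamper-evident record of known fakes is a hash chain, the basic building block behind blockchain-style ledgers. The Python sketch below is a toy model and not WeVerify’s actual design: the class `KnownFakeLedger`, its methods, and the use of SHA-256 fingerprints are all assumptions for illustration. It also matches only byte-identical files, so a re-encoded or cropped copy of a fake would slip past it.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of `data` as a hex string."""
    return hashlib.sha256(data).hexdigest()


class KnownFakeLedger:
    """Append-only, hash-chained list of fingerprints of known fakes."""

    GENESIS = "0" * 64  # placeholder link that precedes the first entry

    def __init__(self):
        # Each entry is a tuple: (media_hash, previous_link, link).
        self.entries = []

    def add(self, media: bytes) -> str:
        """Record a known fake and chain it to the previous entry."""
        media_hash = sha256_hex(media)
        previous_link = self.entries[-1][2] if self.entries else self.GENESIS
        link = sha256_hex((previous_link + media_hash).encode("ascii"))
        self.entries.append((media_hash, previous_link, link))
        return media_hash

    def is_known_fake(self, media: bytes) -> bool:
        """Check whether this exact file has been recorded as a fake."""
        media_hash = sha256_hex(media)
        return any(entry[0] == media_hash for entry in self.entries)

    def verify_chain(self) -> bool:
        """Recompute every link; any edited entry breaks the chain."""
        previous_link = self.GENESIS
        for media_hash, stored_previous, link in self.entries:
            expected = sha256_hex((previous_link + media_hash).encode("ascii"))
            if stored_previous != previous_link or link != expected:
                return False
            previous_link = link
        return True
```

Because each link depends on the one before it, quietly editing an old entry makes `verify_chain()` return `False`, which is the property that makes such a record trustworthy as a shared reference.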

Pros:

- Comprehensive analysis tools for in-depth verification.

- Blockchain database provides a reliable record of known fakes.

Cons:

- Complexity of tools may deter non-technical users.

- Effectiveness dependent on the continuous updating of its fake database.

Best Suited For: Journalists, researchers, and fact-checkers dedicated to uncovering the truth in digital media.

How can we combat deepfakes?

Step 1: Increase Public Awareness: Educate the public about the existence and implications of deepfakes to foster a more discerning online audience.

Step 2: Utilize Detection Tools: Leverage advanced deepfake detection tools and software to identify and flag manipulated content.

Step 3: Implement Strict Regulations: Advocate for and implement stringent legal frameworks that penalize the creation and distribution of malicious deepfake content.

Step 4: Promote Digital Literacy: Encourage digital literacy programs that teach individuals how to critically evaluate online content and recognize deepfakes.

Step 5: Foster Collaboration: Encourage collaboration between tech companies, policymakers, and academic researchers to develop more effective deepfake detection and prevention strategies.

Step 6: Secure Personal Data: Practice and promote better personal data security measures to reduce the likelihood of one’s images or videos being used to create deepfakes.

Step 7: Support Victims: Provide support and resources for individuals who have been victimized by deepfakes, including legal assistance and mental health support.

Step 8: Continuous Technological Advancement: Invest in ongoing research and development to enhance the capabilities of deepfake detection technologies and stay ahead of evolving threats.

FAQs Related to Deepfakes

Can deepfake detection tools achieve 100% accuracy?

While deepfake detection tools are continually improving, achieving 100% accuracy is challenging due to the rapid advancement of deepfake-generating technologies.

Can deepfake technology be used ethically?

Yes, deepfake technology can be used ethically in fields like entertainment, education, and art, provided it’s done with consent and for purposes that do not deceive or harm.

How can individuals protect themselves from deepfakes?

Individuals can protect themselves by being cautious about sharing personal images and videos online and by using privacy settings on social media platforms to control who has access to their content.

Conclusion

The advent of deepfakes represents a formidable challenge in the digital age, testing the resilience of our information integrity and personal privacy. However, with the concerted efforts of technology developers, policymakers, and the informed public, we can mount a robust defense against this threat. By leveraging advanced AI detection tools, advocating for stringent regulations, and fostering a culture of digital literacy and ethics, we can mitigate the risks posed by deepfakes. The journey to combat deepfakes is ongoing, requiring vigilance, innovation, and collaboration to preserve the sanctity of truth in our digital world.